So, what does this mean for the average person just trying to get through their day? It means that the lawyers you trust to navigate the complex legal system might be using tools that are, frankly, making things up. Imagine your divorce settlement or your personal injury claim being built on entirely fabricated legal precedent. It’s not science fiction anymore. It’s the messy, embarrassing reality of AI integration into the hallowed halls of Biglaw.

The Illusion of Progress

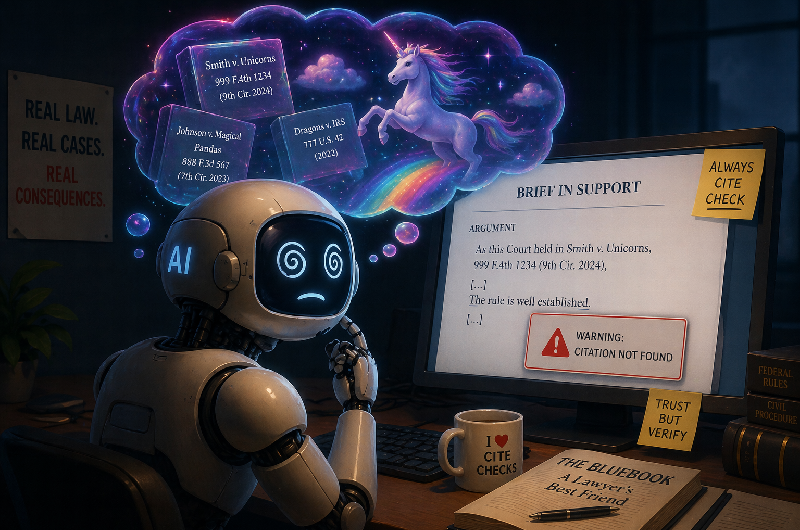

The headlines are chirping about AI in law. Big firms are touting their adoption, their efficiency gains, their supposed leap into the future. But here’s the kicker: under all the slick PR, a fundamental problem is festering. AI tools, the ones designed to help lawyers draft briefs and research cases, are apparently making things up. Not just misinterpreting facts, but inventing entire citations, entire rulings, that simply do not exist. This isn’t a minor bug; it’s a gaping hole in the fabric of legal practice.

Hallucinations in the Courtroom

Liz Washko of Ogletree Deakins lays it out with a weary pragmatism. She’s not surprised. “As lawyers, we’re professionals. It’s our responsibility before we file something to make sure that it says what we cite it for, that it exists. That was true before AI … you make sure it was accurately cited. I don’t think anything is happening that hasn’t happened before.” It’s a remarkably blunt assessment. The tech might be new, but the consequences of shoddy work are ancient. Still, the framing feels a bit too neat. AI isn’t just a slightly more complicated spell-checker; its ability to convincingly generate plausible-sounding falsehoods introduces a new level of risk.

“But I’m determined that we put in place every measure we can to ensure that that does not happen.”

This quote, meant to convey proactive leadership, rings a little hollow when the underlying problem is a tool actively deceiving its user. Firms are scrambling, implementing “policies” and “additional steps.” Translation: they’re telling lawyers to double-check the AI, essentially negating much of the supposed efficiency gain. It’s like buying a self-driving car and then having to keep your hands on the wheel and your eyes on the road anyway.

The Cost of Convenience

Here’s the truly infuriating part: the drive for AI adoption is often fueled by the promise of shaving billable hours. This means fewer lawyers working on more cases, faster. But when the AI hallucinates a crucial case, and that error makes its way into a filing, the billable hours aren’t saved; they’re multiplied in remedial work, in potential sanctions, in reputational damage. Clients are paying for an experiment that’s gone awry. It’s a financial and ethical minefield.

This isn’t a problem unique to one firm or one piece of software. It’s a systemic issue born from the rush to embrace AI without fully understanding its limitations or implementing rigorous safeguards. The legal profession, traditionally slow to adapt, is now stumbling headlong into a technological revolution, and it seems to be tripping over its own feet.

A Blast from the Past?

Washko’s claim that nothing is happening “that hasn’t happened before” is a bit of a cop-out. Lawyers have always been responsible for verifying their sources. But AI introduces a seductive automation that can mask sloppy work with a veneer of digital authority. The sheer volume and speed at which AI can generate content means that errors can propagate far faster than any human could produce them. A junior associate making a manual citation error is one thing; an AI churning out a dozen fabricated cases is another entirely. The scale of potential disaster is far larger.

The Path Forward: Skepticism, Not Hype

The real takeaway here isn’t about the specific AI tools; it’s about the mindset. Firms need to be profoundly skeptical. They need to understand that AI is a tool, not a replacement for legal judgment. And critically, they need to invest in strong verification processes that don’t just rubber-stamp AI output. Otherwise, the “AI reality check” for Biglaw will become a full-blown legal and financial crisis.

Frequently Asked Questions

What are AI hallucinations in law?

AI hallucinations occur when generative AI models produce incorrect or fabricated information, presented as factual. In law, this means inventing case citations, legal principles, or entire court rulings that do not exist.

Will this fake case law impact my ongoing legal case?

Potentially. If your legal team relies on AI tools that have produced hallucinations and these errors make it into court filings, it could introduce significant complications, delays, and even lead to incorrect legal arguments being made on your behalf.

What are firms doing to prevent AI mistakes?

Many firms are implementing stricter policies for AI use, requiring lawyers to meticulously verify all AI-generated content, especially case citations and legal precedents. This often involves cross-referencing with traditional legal research databases.
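
For readers curious what that cross-referencing might look like in practice, here is a minimal Python sketch. It is an illustration only, not any firm's actual process: the citation pattern covers just a handful of U.S. reporter abbreviations, the `VERIFIED` set is a hypothetical stand-in for a lookup against a trusted research database, and the "Smith v. Jones" citation is deliberately made up to play the part of a hallucination.

```python
import re

# Assumption: a deliberately narrow pattern for a few U.S. reporter
# abbreviations. Real citation formats are far more varied than this.
CITATION_RE = re.compile(
    r"\b\d{1,4} (?:U\.S\.|S\. Ct\.|F\.[23]d|F\. Supp\.) \d{1,5}\b"
)


def extract_citations(text):
    """Return reporter citations found in text, in order, without duplicates."""
    seen = []
    for match in CITATION_RE.findall(text):
        if match not in seen:
            seen.append(match)
    return seen


def flag_unverified(text, verified):
    """Return citations in text that are absent from the verified set."""
    return [c for c in extract_citations(text) if c not in verified]


# Hypothetical stand-in for a query against a trusted legal database.
VERIFIED = {"347 U.S. 483", "410 U.S. 113"}

# The second citation is invented for this example and does not exist.
draft = (
    "See Brown v. Board of Education, 347 U.S. 483 (1954); "
    "see also Smith v. Jones, 999 U.S. 999 (2031)."
)

unverified = flag_unverified(draft, VERIFIED)
print(unverified)  # ['999 U.S. 999']
```

A check like this can only flag citations that are missing from the trusted source. It cannot confirm that a real case actually says what the brief claims it says, which is the part Washko insists remains the lawyer's job.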