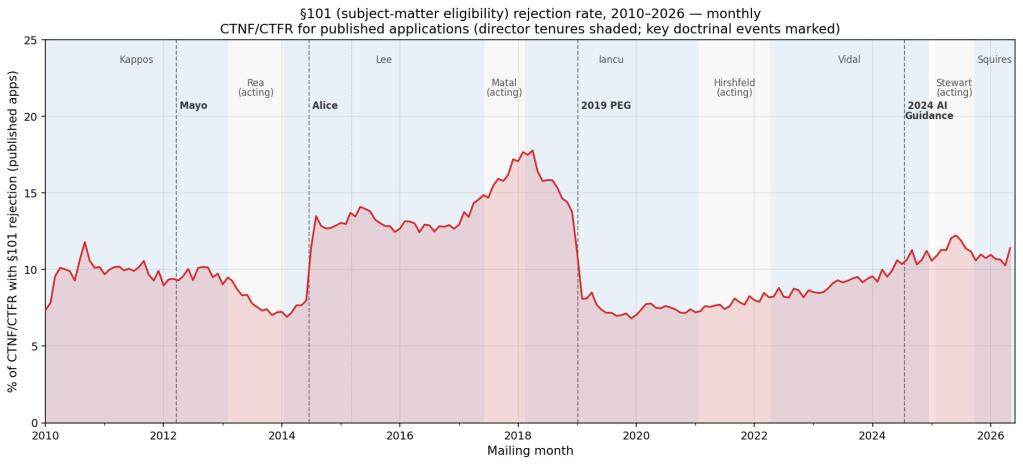

Sixteen years. That’s how long we’ve been wrestling with 35 U.S.C. § 101, that nebulous patent eligibility statute that feels less like law and more like interpretive dance for patent examiners. The Supreme Court dropped its seismic Mayo decision way back in 2012, then doubled down with Alice in 2014, and ever since, the patent world has been trying to figure out what qualifies as patentable subject matter, especially when it comes to software and, yes, AI. We’re talking about millions of office actions, mind you.

But here’s the thing: courts set the rules, sure, but it’s the administrators—the examiners—who actually enforce them. And Dennis Crouch, bless his data-crunching heart, has been digging into millions of USPTO office actions going back to 2010. He’s not just glancing; he’s using a custom classifier to pinpoint those sweet, sweet § 101 rejections. The headline finding? It’s not the obvious march toward total exclusion that many have feared.

The Data Doesn’t Lie (But It Might Confuse)

Crouch’s analysis is the kind of granular, boots-on-the-ground look that cuts through the punditry and the breathless press releases from Big Tech’s patent divisions. We’ve all heard the dirge: AI inventions are unpatentable, plain and simple. It’s a narrative often pushed by those who want to hobble competitors or scoop up the low-hanging patent fruit themselves. But when you wade through actual examination data, the picture gets messier, and frankly, more interesting.

This isn’t just academic navel-gazing. The implications for anyone trying to protect novel AI algorithms, machine learning models, or any software-driven innovation are enormous. If examiners aren’t uniformly applying the § 101 hammer with the ferocity often depicted, then where is the friction?

Who’s Actually Making Money Here?

This is where my reporter’s cynicism kicks in, and why this data is so vital. Everyone says they want clear patent laws. But who benefits when the rules are vague? The lawyers, for starters, churning out briefs and appeals. The companies with armies of in-house counsel who can afford to play the long game of litigation. And, crucially, vagueness hands wide discretion to the examiners themselves, even as they are tasked with a near-impossible job under immense pressure.

Crouch’s data, kept behind a subscriber paywall for now, hints that examiner behavior hasn’t been the monolithic anti-AI tidal wave we might have assumed. If the Mayo-Alice framework, on its own, does only “some of the work,” what’s doing the rest? The human element: examiners’ interpretation and, dare I say, their own evolving understanding, or perhaps their workload priorities.

As Crouch puts it: “Part of the story is that the legal framework only does some of the work. In any system, we also have to look at how that law is administered. For me, this means patent examination data.”

Think about it. The USPTO is swamped. Examiners have production quotas. They’re dealing with technology that’s evolving far faster than any statute or court ruling can keep pace with. So they develop heuristics, shortcuts, and patterns based on their training and the guidance they receive, such as the USPTO’s 2019 Revised Patent Subject Matter Eligibility Guidance. Crouch’s analysis suggests these patterns, when aggregated across millions of cases, tell a story of administration, not just law.

Why Does § 101 Continue to Plague AI Patents?

If the data is showing nuance, why the persistent gloom and doom surrounding AI patents? It’s partly the high-profile rejections, the landmark cases that grab headlines and shape perception. It’s also the inherent difficulty of claiming inventions that sit close to abstract ideas or natural phenomena, which many AI concepts can arguably brush up against. And let’s not forget the sheer volume of applications. For every brilliant, truly novel AI breakthrough, there are many more filings claiming incremental improvements or routine applications of existing tech, and those draw rejections, whether for ineligibility under § 101 or for lack of novelty or obviousness under §§ 102 and 103.

But the real sting, and the reason Crouch’s subscriber-only data is so provocative, is that the administration of § 101 might be more complex—and less predictable—than a simple “AI is unpatentable” decree would suggest. It raises the uncomfortable question: are we being sold a narrative that benefits certain players, while obscuring the actual operational realities within the USPTO?

This isn’t the end of the § 101 debate, not by a long shot. But it’s a crucial reminder that the devil, as always, is in the details. And for AI inventors, those details are buried in millions of office actions. Time for the rest of us to get a look.


Frequently Asked Questions

What is 35 U.S.C. § 101?

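Section 101 defines patent-eligible subject matter: any new and useful process, machine, manufacture, or composition of matter, or any new and useful improvement thereof. Courts have read three judicial exceptions into it: laws of nature, natural phenomena, and abstract ideas. The other core requirements for patentability (novelty, nonobviousness, and adequate disclosure) live in §§ 102, 103, and 112.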

How do Mayo and Alice affect patent eligibility?

Mayo set out, and Alice formalized, a two-step framework. Step one asks whether the claim is directed to a judicial exception: an abstract idea, a law of nature, or a natural phenomenon. If it is, step two asks whether the claim’s additional elements, considered individually and as an ordered combination, supply an “inventive concept” sufficient to transform the claim into a patent-eligible application of that exception. If they don’t, the claim is ineligible.

Are AI inventions patentable?

Patent eligibility for AI inventions is complex and depends heavily on how the invention is claimed, in particular whether the claim supplies an inventive concept that amounts to more than an abstract idea, law of nature, or natural phenomenon. Eligibility is challenging but not impossible, and examiner-level data like Crouch’s offers a more granular view of how § 101 is applied in practice than headline court decisions do.